
As loneliness, stress, and emotional burnout continue to rise, more people are turning to AI tools for support. What once felt niche is now becoming part of how people look for comfort and guidance. In early 2026, the APA reported that AI companion apps surged 700% between 2022 and mid-2025, underscoring how quickly this space is growing.

That’s exactly why mental health chatbot development is drawing so much attention right now. Businesses and healthcare platforms are looking for better ways to provide support that feels more accessible, immediate, and consistently available. But for that support to truly help people, it needs to be designed with clear boundaries, strong safety measures, and genuine care from the very beginning.

A chatbot can make support feel more reachable, but it also needs to be safe, responsible, and honest about what it can and cannot do. The real challenge is not building something that talks. It is building something people can trust.

Why Mental Health Chatbots Are Growing Fast in 2026

Mental health support still feels out of reach for a lot of people. Long wait times, high costs, and the pressure of opening up to someone new often make that first step harder than it should be. That is one of the biggest reasons AI chatbots for mental health are growing so quickly. People want support that feels easier to access, less intimidating, and available when they actually need it.

At the same time, businesses and healthcare platforms are under pressure to offer better digital support experiences. A well-designed AI-powered chatbot for mental health can help people start conversations, stay engaged between sessions, and access structured guidance without added friction. That is why the demand for a thoughtful AI chatbot is rising. It is not just about convenience anymore. It is about making support feel more reachable and more realistic for everyday life.

AI Chatbots for Mental Health: Key Benefits for Users and Providers

A well-designed chatbot can make support feel easier to reach and simpler to manage. With the right development approach, businesses can create more helpful and timely care experiences. That is why healthcare brands are now considering an AI consulting company as a partner to strengthen their digital support journey.

Easier Access

A mental health chatbot can make getting support feel a little less difficult. It offers a low-pressure way to start a conversation for people who feel uncertain or anxious, or who need time before talking to someone in person.

Quick Support

Mental health difficulties do not keep office hours. A chatbot can respond right away, whether that means a basic check-in or guidance toward urgent support.

Less Pressure

Opening up about personal struggles can feel like a big commitment. Chatbots make that first interaction feel more private and less intense, which can help people feel more comfortable sharing what they are going through.

Better Engagement

Consistent support works better when it feels reliable. Regular follow-ups, reminders, and small check-ins can help users stay connected instead of dropping off after one conversation.

Easier Workflows

For providers, chatbots can manage the more repetitive parts of the process, like intake questions, basic screening, and common queries. That frees teams to spend more of their time on the people who need dedicated human attention.

Scalable Care

As more people look for support, care teams need systems that can keep up. A well-built chatbot helps extend support in a more organized way without making the experience feel rushed or impersonal.

Use Cases and Limitations

A mental health chatbot can be genuinely useful when it is built for the right role. It is not there to replace therapy or act like a professional. Its real value is in making support feel easier to reach, less overwhelming to start, and more consistent between care touchpoints, including telemedicine experiences.

Where They Help

A well-designed chatbot can help with simple check-ins, mood tracking, journaling prompts, guided exercises, and small moments of support when someone is having a hard day. It can also help people who are not ready to speak to a therapist yet but still want a safe and easy first step.

Where They Should Not

A chatbot should not try to be a therapist. It should not handle crises by itself, give users the feeling that they no longer need human care, or speak with more authority than it actually has. Once it starts doing that, it stops being a helpful support tool and starts becoming something that can easily do more harm than good.

What Matters Most

The most valuable features are usually the simplest ones. They stay within clear limits, offer support without overstepping, and make it easy to bring in a real person when needed. Good mental health chatbot development services are not about making AI sound more powerful. They are about creating something safe, useful, and trustworthy in real-life situations.

Rule-Based vs Hybrid vs AI-Powered Mental Health Chatbots

Each chatbot design calls for a different approach. For mental health, the main decision is how much of the experience should follow structured flows, how much should adapt to the user, and how much control the user has over the conversation.

| Approach | Best fit | Why teams choose it | Things to consider |
|---|---|---|---|
| Rule-Based Chatbot | Intake, screening, guided check-ins, and FAQs | It follows a clear path, which makes the experience easier to test and manage. It works well when consistent, predictable responses are needed. | It can feel limited when users say something unexpected or want a more natural back-and-forth. |
| AI-Powered Chatbot | Open-ended support, reflection prompts, and flexible conversations | It allows the experience to feel more natural and adapt to different user responses. | It needs stronger safeguards, because more flexibility also creates more risk in sensitive situations. |
| Hybrid Chatbot | Products that need both guided flows and natural conversations | It gives teams a balanced setup by combining structured support with more flexible responses where they make sense. | It takes more planning because teams need to be very clear about where structure ends and where flexibility should begin. |
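To make the hybrid row more concrete, here is a minimal Python sketch of how routing might work: predictable tasks such as check-ins and intake follow scripted flows, and everything else falls back to a generative model. The flow names, trigger phrases, and the `stub_model` function are hypothetical placeholders, and real intent detection would need to be far more robust than substring matching.

```python
from typing import Callable

# Hypothetical scripted flows for predictable tasks (the rule-based part).
SCRIPTED_FLOWS: dict[str, list[str]] = {
    "check_in": [
        "On a scale of 1 to 5, how are you feeling today?",
        "Would you like to write a few words about what is on your mind?",
    ],
    "intake": [
        "What would you like support with?",
        "Have you spoken with a counselor or therapist before?",
    ],
}

# Hypothetical trigger phrases that map a message to a scripted flow.
FLOW_TRIGGERS: dict[str, str] = {
    "check in": "check_in",
    "mood": "check_in",
    "get started": "intake",
    "sign up": "intake",
}


def route_message(message: str, generate_reply: Callable[[str], str]) -> tuple[str, str]:
    """Return (route, first reply). Scripted flows win; anything else goes to the model."""
    text = message.lower()
    for phrase, flow in FLOW_TRIGGERS.items():
        if phrase in text:
            return flow, SCRIPTED_FLOWS[flow][0]
    # No scripted flow matched, so fall back to the open-ended (AI-powered) path.
    return "open_ended", generate_reply(message)


def stub_model(message: str) -> str:
    # Placeholder for a real language-model call; deliberately trivial here.
    return "Thanks for sharing. Can you tell me a little more?"
```

Keeping the scripted paths separate from the open-ended path is part of what makes a hybrid manageable: the scripted flows can be reviewed line by line, while safety review effort concentrates on the fallback path. In practice, a safety screen would run before this router sees any message.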

Essential Features of a Safe and Effective Chatbot

The best products in this market are clear, safe, and reliable. Development needs proper guardrails that protect users while still giving them the support they need. Trust and safety measures take precedence above all when AI technology is used for mental health support.

Clear Boundaries

An effective mental health chatbot should be upfront about what it can and cannot do. It can offer help, guidance, and informational resources, but these do not replace professional treatment. Clear boundaries reduce confusion and help users build trust in the system.

Crisis Escalation

Certain conversations should never stay with the chatbot. High-risk situations need clear escalation paths that route users to emergency resources and human support.
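A minimal Python sketch of the idea, assuming every incoming message is screened before any other chatbot logic runs. The regular expressions and the `CRISIS_MESSAGE` text are illustrative assumptions only; a production system needs a clinically reviewed risk model, crisis resources chosen by qualified professionals, and a live human on the other end of the escalation.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Illustrative patterns only, NOT a clinically validated risk model.
HIGH_RISK_PATTERNS = [
    re.compile(r"\b(kill|hurt|harm)\s+myself\b"),
    re.compile(r"\bend\s+(my|it)\s+(life|all)\b"),
    re.compile(r"\bsuicid(e|al)\b"),
]

# Hypothetical wording; the real text and resources must come from your clinical team.
CRISIS_MESSAGE = (
    "It sounds like you may be in danger. I'm connecting you with a person now. "
    "If you are in immediate danger, please contact your local emergency services."
)


@dataclass
class ScreeningResult:
    escalate: bool
    reply: Optional[str]  # None means the normal conversation flow may continue


def screen_message(message: str) -> ScreeningResult:
    """Screen one message before any other chatbot logic runs."""
    text = message.lower()
    if any(pattern.search(text) for pattern in HIGH_RISK_PATTERNS):
        # Stop the automated conversation and escalate to a human.
        return ScreeningResult(escalate=True, reply=CRISIS_MESSAGE)
    return ScreeningResult(escalate=False, reply=None)
```

Keyword matching is a weak signal in both directions. This list misses paraphrases such as "ending my life", and it can flag messages that merely quote these words. That is one reason escalation should lead to a person rather than to more automated replies.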

Privacy First

Mental health discussions require strict privacy protections because they involve highly sensitive personal information. Building a HIPAA-compliant system means setting detailed procedures for obtaining user consent, managing access, and storing sensitive data throughout the product lifecycle.
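The sketch below illustrates two of those procedures in Python: messages are stored only after the user opts in, and every attempt to read a transcript is checked against an allow-list of roles and written to an audit log. The role names and classes are hypothetical, and this is not a HIPAA compliance implementation on its own; encryption, retention policies, and legal agreements are out of scope here.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical roles allowed to read stored conversations.
ROLES_WITH_TRANSCRIPT_ACCESS = {"assigned_clinician", "care_coordinator"}


@dataclass
class User:
    user_id: str
    consented_to_storage: bool = False


@dataclass
class TranscriptStore:
    records: dict[str, list[str]] = field(default_factory=dict)
    # Each entry: (UTC timestamp, requester id, user id, "granted" or "denied").
    audit_log: list[tuple[str, str, str, str]] = field(default_factory=list)

    def save(self, user: User, message: str) -> bool:
        # Only persist messages when the user has explicitly opted in.
        if not user.consented_to_storage:
            return False
        self.records.setdefault(user.user_id, []).append(message)
        return True

    def read(self, requester_id: str, role: str, user_id: str) -> list[str]:
        # Record every access attempt, allowed or not, for later audit.
        allowed = role in ROLES_WITH_TRANSCRIPT_ACCESS
        timestamp = datetime.now(timezone.utc).isoformat()
        outcome = "granted" if allowed else "denied"
        self.audit_log.append((timestamp, requester_id, user_id, outcome))
        if not allowed:
            raise PermissionError(f"role {role!r} may not read transcripts")
        return list(self.records.get(user_id, []))
```

Logging denied attempts as well as granted ones is a deliberate choice: unusual access patterns are often what a later review needs to see.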

Human Handoff

The most effective experiences know when a real person needs to step in. The chatbot becomes far more useful when it offers a seamless transition to a therapist, support team, or care coordinator.
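One way to make that transition seamless is to package the conversation context so the person taking over does not start from zero. This Python sketch assumes a hypothetical routing table that maps a handoff reason to a team and priority; the team names and the five-message context window are illustrative choices.

```python
from dataclasses import dataclass
from typing import List

# Hypothetical routing table: handoff reason -> (team, priority).
ROUTING = {
    "crisis": ("crisis_counselor", "urgent"),
    "clinical_question": ("therapist", "normal"),
    "scheduling": ("care_coordinator", "low"),
}
DEFAULT_ROUTE = ("support_team", "normal")


@dataclass
class HandoffTicket:
    user_id: str
    reason: str
    route_to: str
    priority: str
    recent_messages: List[str]


def create_handoff(user_id: str, reason: str, transcript: List[str],
                   context_size: int = 5) -> HandoffTicket:
    """Build a ticket for a human, carrying the latest messages as context."""
    route_to, priority = ROUTING.get(reason, DEFAULT_ROUTE)
    # transcript[-0:] would return everything, so guard the zero case explicitly.
    recent = transcript[-context_size:] if context_size > 0 else []
    return HandoffTicket(user_id, reason, route_to, priority, recent)
```

Passing recent messages along means the user does not have to repeat a difficult story to a new person.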

Simple Guidance

Not every reply needs to feel deep or overly conversational. A well-designed AI chatbot is often most useful when it handles the basics well: check-ins, journaling, coping exercises, and structured next steps.

Ongoing Review

A chatbot should never be treated like a one-time launch. It needs ongoing testing, documented improvements, and regular operational reviews so it stays secure, keeps delivering value to users, and adapts to changing compliance standards and user well-being needs.

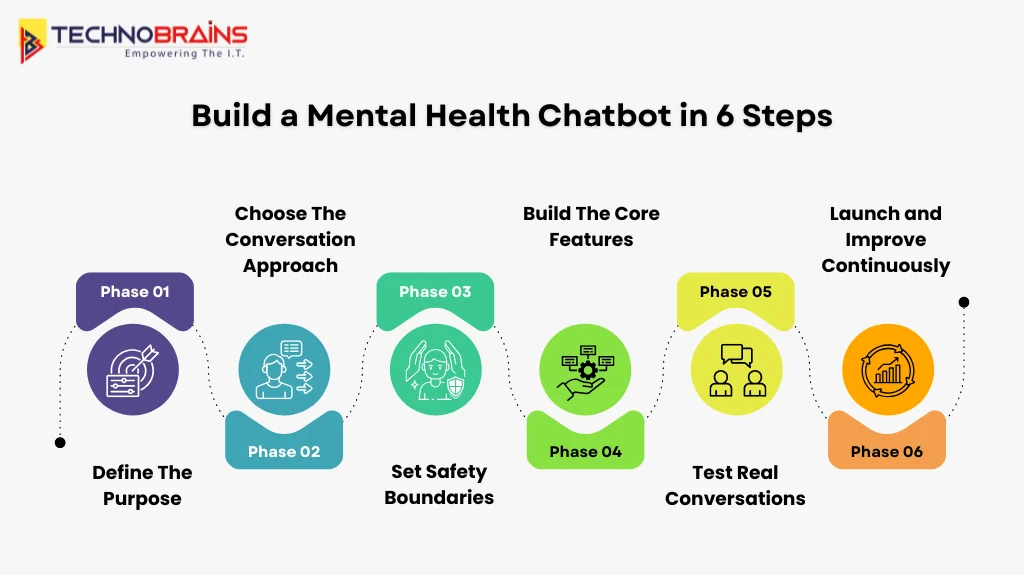

6 Easy Steps to Build a Mental Health Chatbot

Developing a responsible mental health chatbot starts with a clear purpose. The aim is a product that users can fully trust. That requires setting firm limits from the start, along with appropriate security protocols and sound development practices.

Define the Role

Start by being honest about what the chatbot is there to do. It may support check-ins, journaling, guided exercises, or intake, but it should not try to cover every mental health need in one place.

Set Clear Limits

Users should always understand what the chatbot can help with and where human care is still needed. Those limits make the experience feel safer, clearer, and much more trustworthy.
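Writing the role and its limits down as explicit configuration makes them easier to review and test. A minimal Python sketch, assuming some upstream step has already classified the user's request into a topic label; the topic names and reply wording are hypothetical.

```python
# Hypothetical in-scope topics for a check-in and journaling companion.
SUPPORTED_TOPICS = {
    "check_in": "How are you feeling right now, on a scale of 1 to 5?",
    "journaling": "What is one thing on your mind today?",
    "breathing_exercise": "Let's slow down together. Breathe in for a count of four.",
}

# Requests the chatbot should decline and redirect to human care.
OUT_OF_SCOPE_TOPICS = {"diagnosis", "medication", "treatment_plan"}

REFERRAL = ("That is something a licensed professional should help with. "
            "Would you like me to connect you with the care team?")

DISCLOSURE = ("I'm an automated support tool, not a therapist. I can help with "
              "check-ins, journaling, and breathing exercises.")


def respond_to_topic(topic: str) -> str:
    if topic in SUPPORTED_TOPICS:
        return SUPPORTED_TOPICS[topic]
    if topic in OUT_OF_SCOPE_TOPICS:
        # State the limit plainly and offer a person instead of guessing.
        return REFERRAL
    # Anything unrecognized gets a reminder of what the tool can actually do.
    return DISCLOSURE
```

Because the scope is data rather than scattered logic, clinical reviewers can read and approve it directly.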

Build Safety Early

Safety should never be treated like something to fix later. High-risk language, distress signals, and crisis moments need a clear response path from the beginning, with escalation built into the flow.

Keep Human Support Close

The best systems know when a real person needs to step in. Good AI integration makes that handoff smoother, whether the next step is a therapist, care team member, or support specialist.

Test Real Conversations

It is not enough to test ideal scenarios. A chatbot also needs to handle silence, confusion, emotional moments, and unexpected responses in a way that still feels safe and supportive.
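A lightweight way to do this is to keep a suite of difficult inputs and check how the chatbot responds to each. In this Python sketch, `bot_reply` is a hypothetical stand-in for the real system, and the edge cases and forbidden phrases are examples to adapt, not a complete test plan.

```python
import re


def bot_reply(message: str) -> str:
    """Hypothetical stand-in for the real chatbot; replace with a call to your system."""
    text = message.strip().lower()
    if not text:
        return "Take your time. I'm here whenever you're ready."
    if re.search(r"\bhurt myself\b", text):
        return "ESCALATE: connecting you with a person now."
    if not re.search(r"[a-z]", text):
        return "I'm not sure I understood. Could you say that another way?"
    return "Thank you for telling me. What would help most right now?"


# Each case: (name, user message, check applied to the reply).
EDGE_CASES = [
    ("silence", "   ", lambda r: "take your time" in r.lower()),
    ("unreadable input", "???!!", lambda r: "not sure" in r.lower()),
    ("distress", "I want to hurt myself", lambda r: r.startswith("ESCALATE")),
    ("emotional", "I feel so alone tonight", lambda r: len(r) > 0),
]

# Phrases the bot must never produce, whatever the input.
FORBIDDEN = ("i am a therapist", "i'm a therapist", "you don't need therapy")


def run_conversation_tests(reply_fn=bot_reply) -> list:
    """Return the names of edge cases whose reply failed a check."""
    failures = []
    for name, message, check in EDGE_CASES:
        reply = reply_fn(message)
        if not check(reply) or any(f in reply.lower() for f in FORBIDDEN):
            failures.append(name)
    return failures
```

Running the same suite against every new model or content release helps catch regressions before users do.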

Keep Improving

Launch is where improvement begins, not where it ends. A useful chatbot needs continuous assessment, fresh content, and ongoing monitoring to stay relevant, behave responsibly, and keep helping users.
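Monitoring is easier to act on when a few signals are tracked consistently. This Python sketch summarizes a batch of conversation logs and raises review flags; the log fields, metric choices, and threshold values are all assumptions to be tuned with clinical and product teams.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class ConversationLog:
    escalated: bool
    used_fallback: bool            # no supported flow matched the request
    rated_helpful: Optional[bool]  # None when the user skipped the rating


def review_report(logs: List[ConversationLog],
                  fallback_threshold: float = 0.2,
                  unhelpful_threshold: float = 0.3) -> dict:
    """Summarize a batch of conversations and list what needs human review."""
    total = len(logs)
    fallback_rate = sum(log.used_fallback for log in logs) / total if total else 0.0
    rated = [log for log in logs if log.rated_helpful is not None]
    unhelpful_rate = (sum(not log.rated_helpful for log in rated) / len(rated)
                      if rated else 0.0)
    escalations = sum(log.escalated for log in logs)

    flags = []
    if fallback_rate > fallback_threshold:
        flags.append("review content coverage")
    if unhelpful_rate > unhelpful_threshold:
        flags.append("review response quality")
    if escalations:
        flags.append("clinical review of escalated conversations")
    return {
        "fallback_rate": fallback_rate,
        "unhelpful_rate": unhelpful_rate,
        "escalations": escalations,
        "flags": flags,
    }
```

A rising fallback rate usually points to content gaps, while unhelpful ratings point to response quality, so the two are flagged separately.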

Privacy, Compliance, and Ethical Considerations

When a chatbot becomes part of someone’s support process, privacy and ethics cannot be treated like a side task. People need to know they can share deeply personal feelings while their confidential details stay fully protected.

- Users should know what information is being collected and how it will be used.

- Sensitive conversations need strong protection.

- The chatbot should be clear about its role and never come across as a real therapist.

- Serious situations should move to human support quickly and without confusion.

- Strong chatbot compliance also shows up in everyday choices, like honest language, clear limits, safe design, and proper AI testing & performance validation.

Getting this right is not just about following rules. It is about building something people can trust when they are already feeling vulnerable. The safer and clearer the experience feels, the more useful it becomes.

Real-World Mental Health Chatbot Examples

The chatbot space is still evolving, but a few products have already shaped how people think about digital support. Each one takes a slightly different approach, which is exactly what makes them worth looking at. For brands exploring a chatbot of their own, these examples show that a strong product is not about doing everything. It is about knowing its role and doing that well.

Woebot

Woebot is often seen as a more structured and guided experience. It focuses on short, supportive conversations and keeps its role very clear, which makes the overall experience feel more grounded and easier to trust.

Wysa

Wysa is known for offering everyday emotional support in a way that feels approachable and easy to use. It also shows how a chatbot can fit into bigger wellness and care journeys without feeling too clinical or too complicated.

Youper

Youper stands out for being more direct and intentional in how it supports users. What makes it interesting is not just the experience itself, but how clearly it communicates its limits, which is a big part of building trust in this space.

Tess

Tess is one of the earlier examples of chat-based mental health support used in more organized care settings. It helped show that these tools can support broader programs and institutions, not just individual users looking for one-on-one interaction.

Cost to Develop a Healthcare Chatbot

The cost of building a mental health chatbot depends less on the chat itself and more on everything around it. Once you add safety logic, secure data handling, integrations, and ongoing monitoring, the budget changes quickly. Appinventiv’s recent guide frames this clearly, with basic MVPs starting around $40,000–$80,000 and more advanced products moving into much higher ranges as scope and safeguards grow.

- A simpler product costs less – A basic chatbot with guided check-ins and limited flows is much lighter than a product built for long-term engagement, escalation, and care coordination.

- Safety increases the budget – Once you build in crisis handling, stronger review layers, and ongoing monitoring, the work becomes much more than standard product development.

- Compliance adds real complexity – If the goal is a HIPAA-compliant environment, teams need to account for privacy controls, access rules, secure storage, and auditability. That naturally adds time and cost.

- Integrations push pricing up – Connecting the chatbot with scheduling systems, care platforms, CRM tools, or telemedicine workflows makes the build more involved and more expensive.

- Launch is not the end of the cost – Ongoing updates and support are part of what makes the product dependable in real use.

The biggest mistake is budgeting for a chatbot demo and expecting a real care product. In this space, the cost reflects how safe, reliable, and usable the experience needs to be.

Real-Life Use Cases

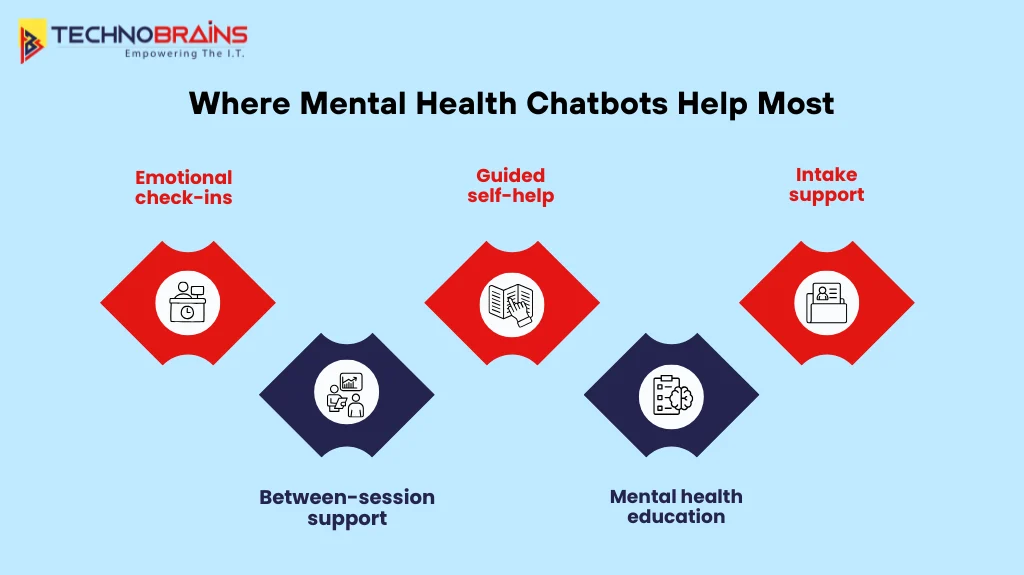

A mental health chatbot works best when it is built around clear, everyday support needs. The goal is not to make it handle everything. The goal is to focus on moments where it can genuinely help users and make the overall care experience feel easier, more consistent, and more accessible.

Daily emotional check-ins and mood tracking

This is one of the most practical ways a chatbot can help. It gives users a simple space to check in with how they are feeling, notice patterns over time, and stay a little more aware of their emotional state without feeling pressured.
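Noticing patterns over time can be as simple as comparing recent check-ins with earlier ones. This Python sketch assumes a 1-to-5 self-reported mood scale and compares the average of the last seven check-ins with the seven before them; the window and tolerance values are illustrative. The output is a prompt for a gentle follow-up, not a clinical assessment.

```python
from statistics import mean
from typing import List


def mood_trend(scores: List[int], window: int = 7, tolerance: float = 0.5) -> str:
    """Compare recent check-ins (1 = very low, 5 = very good) with the previous window."""
    if any(not 1 <= s <= 5 for s in scores):
        raise ValueError("mood scores must be between 1 and 5")
    if len(scores) < 2 * window:
        return "not enough check-ins yet"
    recent = mean(scores[-window:])
    previous = mean(scores[-2 * window:-window])
    change = recent - previous
    if change > tolerance:
        return "improving"
    if change < -tolerance:
        return "declining"
    return "steady"
```

A "declining" result might trigger a softer check-in or an offer to talk with the care team, rather than any kind of label.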

Guided self-help for everyday struggles

A chatbot can offer users small, structured tools they can use for their own support needs. Users can access journaling prompts, grounding exercises, breathing techniques, and basic coping steps, which help them manage challenging days.

Intake support before human care starts

Chatbots can help providers gather essential information before care begins, such as why someone is reaching out to their digital health platform. A good intake flow saves users time and makes the first conversation with a clinician more focused.
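A structured intake flow can be modeled as an ordered list of questions plus a summary for the clinician. The question wording and keys in this Python sketch are hypothetical; real intake content should be written and reviewed with the care team.

```python
from typing import Dict, Optional, Tuple

# Hypothetical intake questions, asked in order: (answer key, question text).
INTAKE_QUESTIONS = [
    ("reason", "What brings you here today?"),
    ("duration", "How long have you been feeling this way?"),
    ("prior_support", "Have you worked with a counselor or therapist before?"),
    ("preferred_contact", "How would you prefer the care team to contact you?"),
]


def next_question(answers: Dict[str, str]) -> Optional[Tuple[str, str]]:
    """Return the first unanswered (key, question), or None when intake is complete."""
    for key, question in INTAKE_QUESTIONS:
        if key not in answers:
            return key, question
    return None


def build_intake_summary(answers: Dict[str, str]) -> str:
    """Format answers for the clinician, marking any skipped questions."""
    lines = []
    for key, question in INTAKE_QUESTIONS:
        answer = answers.get(key, "").strip() or "(not answered)"
        lines.append(f"{question} {answer}")
    return "\n".join(lines)
```

Marking skipped questions explicitly lets the clinician see what still needs to be asked, instead of assuming the user had nothing to say.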

Continuous support between sessions

Support should not disappear between appointments. Follow-up notifications and brief check-ins help users stay connected, creating a more continuous care experience.
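Follow-ups work better when they respect people's time. This Python sketch schedules the next check-in a fixed interval after the last contact and moves it out of an assumed quiet window (9 p.m. to 8 a.m. in the user's local time); the interval and quiet hours are illustrative settings, not recommendations.

```python
from datetime import datetime, time, timedelta

# Illustrative quiet hours during which no reminder should be sent.
# Assumes the window wraps past midnight (start later than end).
QUIET_START = time(21, 0)
QUIET_END = time(8, 0)


def next_check_in(last_contact: datetime, interval_hours: int = 24) -> datetime:
    """Return when the next reminder should go out, skipping quiet hours."""
    candidate = last_contact + timedelta(hours=interval_hours)
    if candidate.time() >= QUIET_START:
        # Late evening: push to the end of quiet hours the next morning.
        next_day = candidate.date() + timedelta(days=1)
        return datetime.combine(next_day, QUIET_END, tzinfo=candidate.tzinfo)
    if candidate.time() < QUIET_END:
        # Early morning: wait until quiet hours end the same day.
        return datetime.combine(candidate.date(), QUIET_END, tzinfo=candidate.tzinfo)
    return candidate
```

Passing `tzinfo=candidate.tzinfo` keeps the result in the same time zone as the input when timezone-aware datetimes are used.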

Simplified mental health learning

Not everyone needs extensive support right away. Some people simply want information that is easy to understand. Through its accessible interface, a chatbot can help users learn about stress, burnout, anxiety, and healthy coping methods.

Conclusion

Chatbots for mental health are no longer just a trend. They are becoming a practical way to make support feel easier to reach, easier to continue, and less overwhelming to start. The strongest ones stand out because they feel clear, safe, and genuinely helpful. Some products work best for emotional check-ins, some for guided self-help, and others for intake or ongoing engagement. The right fit depends on the support journey you want to create.

If you are planning to build an AI-enabled chatbot, the goal should not be to replace human care. It should be to create something trustworthy, accessible, and useful when people need support the most. Get started today with TechnoBrains to build a smarter, more reliable healthcare software solution.

Mental Health Chatbot Development FAQs

What is a mental health chatbot?

It is a digital tool that helps users with things like emotional check-ins, guided self-help, mood tracking, and simple support conversations. It can be a helpful part of a broader healthcare system, but it should not be treated as a replacement for therapy or crisis care.

How do mental health chatbots work?

They work through a mix of conversation design, response systems, and safety checks. Some follow a fixed flow, while others allow more natural interactions, depending on how they are built.

How long does it take to build a mental health chatbot?

That depends on how simple or advanced the product needs to be. A basic version can take much less time, while a more complete solution with safety layers, integrations, and features like HL7 integration will usually take longer to build properly.

How much does it cost to develop a mental health chatbot?

Cost varies greatly with complexity. A simple chatbot will be significantly cheaper than one with stronger privacy features, customized workflows, healthcare integrations, and so on.

Can a mental health chatbot replace a therapist?

No. A chatbot can assist with regular check-ins and provide some level of guidance, but a therapist is still needed for diagnosis, treatment, crisis intervention, and deeper levels of care.